I see many people complaining about websites crashing or becoming inoperable as governments rush out IT systems to support vaccinations. People wonder how this happens. You might grumble: websites don’t normally crash like that, so these new ones must be terrible. Let me explain why that’s not necessarily the case.

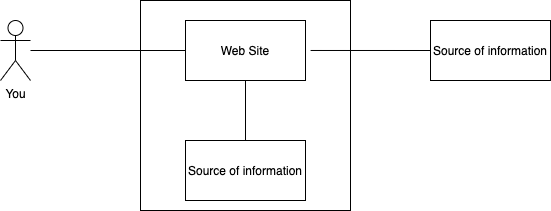

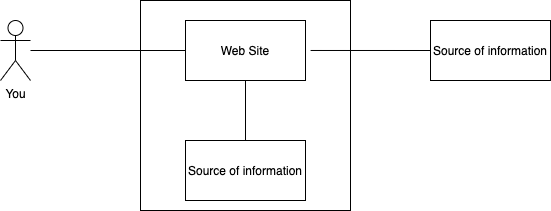

Let’s talk about the diagram above. Websites are made of software running within an environment. (An environment can be a physical computer in a data center, several computers working together, or even a mainframe. In the diagram, it is the box surrounding the web site box.) You use an app or your browser to send a request for information through the Internet to the web site. The software that makes up the web site provides you with that information in a response. Sometimes the information comes from within the same environment; other times the software has to go outside the environment to get it. For example, you might go to a web site and click a link to get store hours: that is your request. The web site sends back a response, which is a web page with the store hours. Another time you might send a request asking a site for all your account information. In that case the web site might gather that information from a number of different systems outside the environment and then send you a response containing all of it.

Things often start to break down when more requests come in than the web site can handle. There are a number of reasons for that. As requests come in, web site software will sometimes need more resources, like CPU or memory, to respond to the growing number of requests. Sometimes the web site software asks for more resources than the environment allows. When that happens, the software might fail, just as a car fails when it runs out of gas. People monitoring the software might bring it back up again, but if the requests are still coming in too fast, the same problem recurs. Often it is only when people give up and the requests subside that the web site software can finally come back up and work without crashing or becoming inoperable.
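To make the "running out of gas" idea concrete, here is a toy simulation, not a model of any real system. It assumes, purely for illustration, that each unfinished request holds a fixed amount of memory, that the software can finish a fixed number of requests per second, and that the environment has a hard memory limit. All the numbers and the `simulate` function are hypothetical. When requests arrive more slowly than they can be finished, nothing piles up; when they arrive faster, the backlog grows every second until the limit is crossed.

```python
# Toy model with made-up numbers, for illustration only.
MEMORY_LIMIT_MB = 1000       # hypothetical memory the environment allows
MEMORY_PER_REQUEST_MB = 10   # hypothetical memory held by each unfinished request
COMPLETED_PER_SECOND = 50    # hypothetical number of requests finished per second


def simulate(arrivals_per_second: int, seconds: int) -> str:
    """Return 'ok', or the second at which memory use first exceeds the limit."""
    in_flight = 0  # requests received but not yet answered
    for second in range(1, seconds + 1):
        in_flight += arrivals_per_second                   # new requests arrive
        in_flight -= min(in_flight, COMPLETED_PER_SECOND)  # some get finished
        memory_used = in_flight * MEMORY_PER_REQUEST_MB    # backlog holds memory
        if memory_used > MEMORY_LIMIT_MB:
            return f"crashed at second {second}"
    return "ok"


if __name__ == "__main__":
    print(simulate(arrivals_per_second=40, seconds=60))  # keeps up
    print(simulate(arrivals_per_second=80, seconds=60))  # falls behind
```

With 40 requests a second the software keeps up indefinitely; at 80 a second the backlog grows by 30 requests every second and the limit is crossed in the fourth second. Restarting does not help while the arrival rate stays above what the software can finish.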

Not all web site software consumes resources until it crashes. Some software sets a limit to prevent that from happening. For example, once the limit is reached, the software might turn requests away instead of working on them, so that it does not use up too many resources. It sends you a response essentially saying it can’t respond properly right now. The software didn’t crash, but you didn’t get the answer you wanted.
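Here is a minimal sketch of that kind of limit, often called load shedding. It assumes the limit is a maximum number of requests handled at the same time; `LoadShedder` and `max_in_flight` are names made up for this example, not part of any particular web framework. When every slot is busy, the request is refused immediately with HTTP status 503 (Service Unavailable), the standard "try again later" response, instead of being worked on.

```python
import threading


class LoadShedder:
    """Handle at most max_in_flight requests at once; refuse the rest right away."""

    def __init__(self, max_in_flight: int):
        self._slots = threading.BoundedSemaphore(max_in_flight)

    def handle(self, work):
        # Try to take a slot without waiting. If none is free, shed the request.
        if not self._slots.acquire(blocking=False):
            return 503, "Service busy, please try again later"
        try:
            return 200, work()  # do the real work and answer normally
        finally:
            self._slots.release()  # free the slot for the next request


if __name__ == "__main__":
    shedder = LoadShedder(max_in_flight=1)

    def slow_work():
        # While this request still holds the only slot, a second one arrives.
        print(shedder.handle(lambda: "second request"))
        return "store hours"

    print(shedder.handle(slow_work))
```

In the demo the second request is refused with a 503 while the first one finishes normally. Real web servers and load balancers offer their own versions of this setting; the point here is only that refusing quickly is what keeps the software itself from falling over.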

One way to prevent this is to get a really big environment to run the web site software. There are two problems with this approach. One is that it can be difficult to know how big the environment should be; this is especially true of new web sites. The other is that it can be expensive. Imagine buying an 18-wheeler instead of a minivan just so you have it on the day you move house. That doesn’t make sense: you would be paying for trucking capacity you don’t normally need. The same is true of web site software environments.

Another difficulty can occur when the web site software has to leave the environment to get information. The web site software might have plenty of capacity in its environment, but the other systems it has to reach outside the environment may not. In that case, those other systems can fail, time out, or become very slow, and making the web site’s own environment bigger will not fix that. You cannot just add capacity in the middle if the other systems are capped. The best you can do as the developer of the web site is to find ways to avoid asking the back-end systems for too much information. For example, if 1 million users are asking for rate information that changes daily, you can ask the back-end system for it once and then serve that answer to the million users that day, rather than asking for the same information a million times.
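The rate example is a form of caching. Below is a minimal sketch, assuming the information really does change only once per calendar day. `DailyCache` and the `fetch` function passed to it are hypothetical stand-ins for whatever actually calls the back-end system; `today` is a parameter only so the date can be controlled in a test.

```python
import datetime


class DailyCache:
    """Call the back-end system at most once per calendar day and reuse the answer."""

    def __init__(self, fetch, today=datetime.date.today):
        self._fetch = fetch  # hypothetical function that asks the back-end system
        self._today = today  # returns the current date; swappable for testing
        self._day = None     # date the cached value was fetched on
        self._value = None

    def get(self):
        day = self._today()
        if self._day != day:  # first request of a new day: refresh from back end
            self._value = self._fetch()
            self._day = day
        return self._value    # every other request is served from memory


if __name__ == "__main__":
    calls = 0

    def fetch_rates():
        global calls
        calls += 1  # count how often the back-end system is actually asked
        return {"rate": 1.5}

    cache = DailyCache(fetch_rates, today=lambda: datetime.date(2021, 3, 1))
    for _ in range(1_000_000):  # a million users asking on the same day
        cache.get()
    print(calls)
```

A million requests on the same day produce a single call to the back-end system. This sketch keeps the answer in one program’s memory and ignores thread safety; a real site spread over several computers would more likely use a shared cache or HTTP caching headers, but the idea of asking once and answering many times is the same.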

There are many ways to make web sites resilient and capable of responding to requests. However, with enough load, any web site will crash or become inoperable. The job of IT architects like me is to make the chances of that happening as small as we can. But there is always a chance, especially with new systems facing great demand.