I’m always reading about artists in such places as the New York Times, the Guardian, Wallpaper and more. The two dozen pieces below capture just some of the good things I’ve found in those publications since my last post on the arts in October.

First up, some good pieces from the Times. I highly recommend the first two, on Van Gogh and David.

- Van Gogh and the Meaning of Yellow – The New York Times – www.nytimes.com

- Face to Face With Jacques-Louis David, History’s Most Dangerous Painter – The New York Times – www.nytimes.com

- Fallen Confederate Statues Take Center Stage in the Year’s Boldest Show: “Monuments,” a group exhibition in Los Angeles, led by Kara Walker, places contemporary art face to face with statuary removed in the last decade.

Each Friday the Guardian posts a newsletter on the arts, and I have to say, it is consistently rich and full of good things to check out. We are lucky to have such art coverage in such a major publication. Here’s just a small sample of things I found there:

- A Day with David Bowie: how a visit to a psychiatric clinic changed him – and his music | David Bowie | The Guardian – www.theguardian.com

- Ai Weiwei on AI, western censorship and returning home

Wallpaper also has good arts coverage. It tends to focus on contemporary artists, as in these pieces:

- Lexa Gates is a performance artist – but does she know it? | Wallpaper*

- Jean-Michel Basquiat’s works on paper reveal an artist’s technical mastery of his medium

While I love good painters and sculptors, I have a fondness for artists who work in collage and other forms that involve graphic design. Kalman, Heartfield and Kruger are just three examples found below, with some other artists mixed in:

- Artist of the day: Artist of the day, June 12: Tibor Kalman, American graphic designer of Hungarian origin – visualdiplomacyusa.blogspot.com

- John Heartfield, the German Visual Artist Who Pioneered the Use of Art as a Political Weapon ~ Vintage Everyday – www.vintag.es

- Barbara Kruger – Paste Up – London – Sprüth Magers – spruethmagers.com

- The Future Was Then: an Exhibition of Fascist Italian Posters

- Daniel Benneworth–Gray – www.danielgray.com

The following don’t have any particular idea tying them together; I just thought they were interesting and worth a look:

- Seth Rogen’s Ceramics Shouldn’t Be Scrutinized | Artsy – www.artsy.net

- n+1 | n+1 is a print and digital magazine of literature, culture, and politics. – www.nplusonemag.com

(Top image from the piece on Van Gogh; middle image from the work Kalman did for the restaurant Florent in the 1980s.)

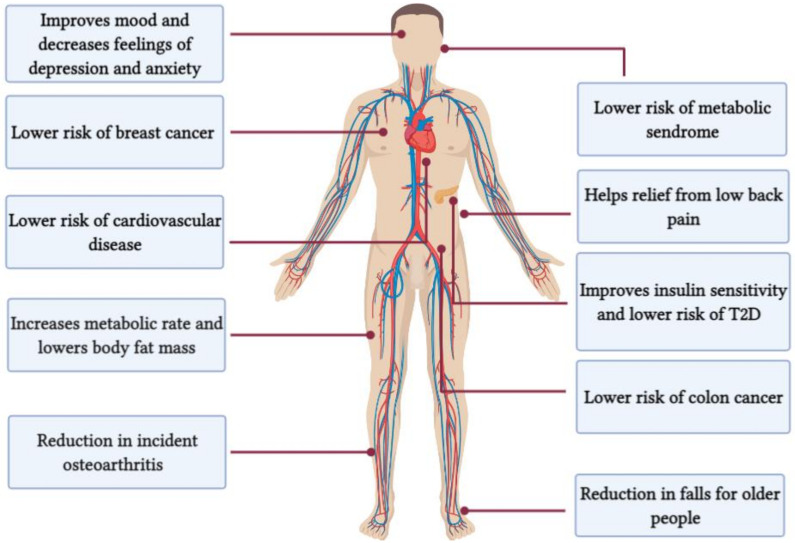
