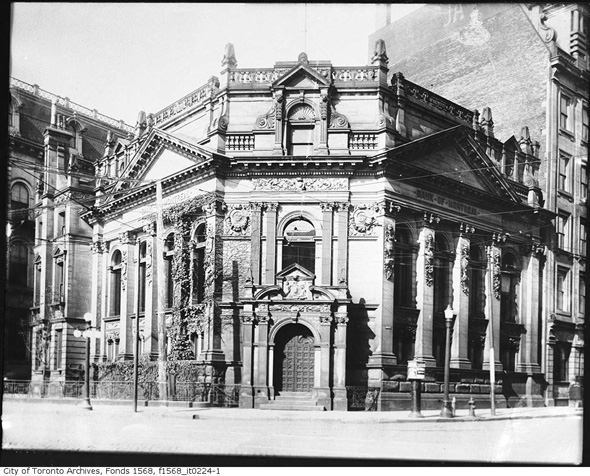

You may only think of transportation in a practical sense of how you travel from A to B. However, there are many hidden assumptions in your travels, including ideas about Comfort, Cost and Convenience. And those three C words got me thinking about another C word, Class.

I started thinking about our underlying assumptions about transportation when I read this piece: “transportation is about bodies,” by Navneet Alang. A key quote for me:

“I’m just saying that as a person who spends a lot of time in the suburbs: to “most people” — and I here I don’t mean most people in a generic, metaphorical sense, but in a literal and political sense — bike lanes and transit and so on don’t sound so much like options as much as the ravings of a crazy person. And it all sounds insane because the vast majority of them are concerned about their bodily comfort, and we are asking them to be less comfortable. We are saying what at least sounds to them like “you are going to have face your own body and feel more uncomfortable.” Any approach to changing transportation habits or making the case for why we should has to, in some way or another, deal with that simple fact.”

A light bulb went off when I read that. Transportation is about the best way to get from A to B. The best way can be defined in terms of speed, effort, convenience, comfort and cost. For biking advocates, bikes are the best way in terms of cost and convenience (e.g., easy to park, flexible routes). Automobile advocates think cars are the best way in terms of speed, effort (none), and comfort. For patrons of public transit like the TTC, it is somewhere in between. In every case, travellers are thinking about their bodies, their physical selves, when they think about travelling. Some subway riders don’t want to be all sweaty when they get to work, and some car owners do not want to be crammed in a bus in winter with sick passengers. Meanwhile bike riders love the idea that their commute makes them physically fit, unlike the feeling they get when stuck in a car or a bus. Each sees their means of transportation as the best way, depending on what they value.

Class is an additional way people think about themselves as they commute. This is especially so when they are travelling commercially. On trains and planes and ships there are different classes of passengers, and while they may all get there with the same speed and effort, the comforts and costs and even conveniences differ depending on the classification of your seat.

As for automobiles, in cities where public transportation is lacking, class is assumed based on the type of vehicle you ride. People with expensive cars are supposedly of a higher class, people with beat-up cars of a lower class, and bicycle riders of the lowest class. That is why you will see people driving cars they cannot really afford: they don’t want their vehicle to indicate in any way a lower class status.

Class is more difficult to discern in cities where public transportation is good. Wealthy people in cities like New York might ditch their expensive car and use the subway because it is faster and more convenient. That is also true with cabs: rich and poor hop in and out of the same yellow cars to go from uptown to downtown (and vice versa). In New York and beyond, newer transportation options like Uber and Lyft also tend to water down class indicators. While services like Uber offer levels of class in terms of vehicle selection, you can also randomly get an expensive car with the basic UberX option. Subways, cabs and Ubers all blur the ability to use someone’s commute as a class indicator.

Class and commuting tend to travel as a pair. I would extend this statement by daring to say that bike lane advocates and public transportation advocates are likely to fall on the left-wing/progressive side of politics, and their views on class tend to mix in with this, while car advocates are likely to fall on the right-wing/conservative side. So when people are advocating for adding or removing bike lanes, they are promoting their ideas on class as much as they are promoting their ideas on the best way to travel. It’s hard to argue rationally for better cities with more bike lanes and congestion pricing with someone who for many years has worked hard and aspired to drive a very expensive car freely all around the city.

If you are going to advocate for certain transportation options, you need to account for speed, effort, convenience, comfort and cost. But you’d be wrong to leave out class: it is an essential element of any decision made when it comes to travel.

(Image of “Planes, Trains and Automobiles” from Wikipedia, a movie as much about class as it is about transportation, with class being a theme that comes up often in John Hughes’s films.)

I often talk about exercise from a physical point of view, but not from a mental point of view. I thought I should expand my idea of exercise when I came across this quote from G.K. Chesterton:

It’s difficult to not think about migrants and natives. So many problems in the world have their roots in who belongs to a place and when. So I was interested to hear about this book: Home Rule – National Sovereignty and the Separation of Natives and Migrants from Duke University Press. The Duke University Press says:

If you want to read some thoughtful posts today, I highly recommend

It’s ok to hate August and love February (and vice versa. Or neither.)

Waste is a failure of imagination. Woodworkers know that especially. Good woodworkers will try and

I have come across the idea of
