It’s an exciting time to be a software developer. Exciting and weird.

It’s exciting because of what developers are capable of doing with agentic AI tools from companies like Anthropic. It’s weird because as Clive Thompson writes: “In the era of A.I. agents, many Silicon Valley programmers are now barely programming”. Yet while programmers may be barely programming, they are still producing code.

Indeed, not only are they producing code, they are producing A LOT of it. Take this example in this other piece by Mike Isaac and Erin Griffith:

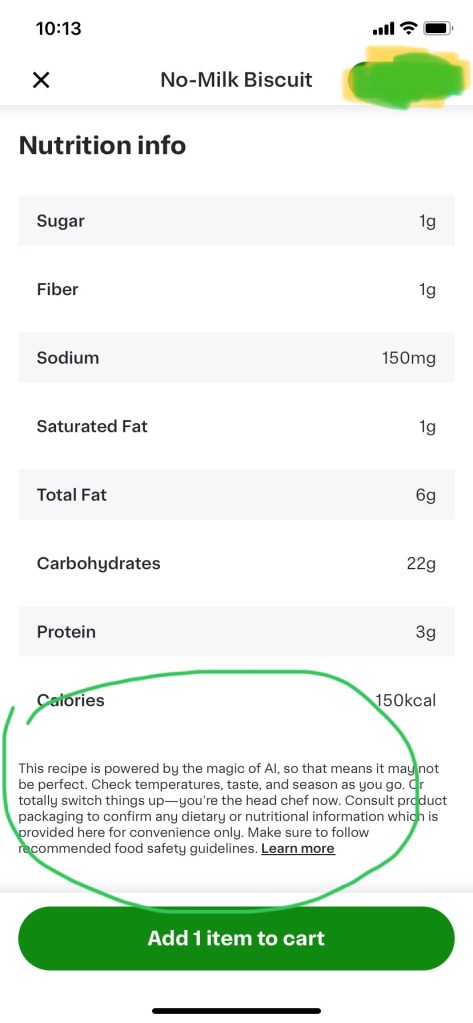

When a financial services company recently began using Cursor, an artificial intelligence technology that writes computer code, the difference that it made was immediate.

The company went from producing 25,000 lines of code a month to 250,000 lines. That created a backlog of one million lines of code that needed to be reviewed, said Joni Klippert, a co-founder and the chief executive of StackHawk, a security start-up that was working with the financial services firm.

And it’s not limited to senior programmers. As Isaac and Griffith write:

“The blessing and the curse is that now everyone inside your company becomes a coder,” said Michele Catasta, the president and head of A.I. at Replit, an A.I. coding start-up in Foster City, Calif.

It all sounds great: who wouldn’t want such a productivity gain? Alas, there’s a problem. Isaac and Griffith add:

“The sheer amount of code being delivered, and the increase in vulnerabilities, is something they can’t keep up with,” she said. And as software development moved faster, that forced sales, marketing, customer support and other departments to pick up the pace, Ms. Klippert added, creating “a lot of stress.”

A lot of code means a lot of stress. Not just for sales, marketing and customer support teams, either. I suspect it’s also causing plenty of stress for testing, operations and application support teams in organizations that rely on a DevOps lifecycle for software development.

To deal with such stress, any organization that adopts AI technology to write code and also uses DevOps needs to reconsider its entire software development lifecycle in light of that code. AI-generated code, no matter how fast it is produced, will have limited value if it’s not reviewed and tested properly. It will have limited value if it is not written in a way that application support and operations teams can support. And it will be hard for others to fix and enhance later if it is not built and deployed using the processes the organization has agreed upon.

To avoid creating unsupportable code that accelerates technical debt, developers of all levels need to know more about their code than “it works on my machine”. If they expect others within their organization to use their code, they must write code that doesn’t disrupt the organization’s DevOps lifecycle. They must take the time to make their AI-generated code supportable. That additional effort by the people generating the new code is essential if organizations are going to survive the code tsunami that is coming.

The good news, I think, is that everyone who participates in the DevOps lifecycle will be able to devise ways to support AI developers so that their code fits in nicely. After all, as this piece by IBM on the DevOps lifecycle explains: “The DevOps process describes how software moves from ideation, through production and feedback, and back to ideation, with development and operations teams working as a single, collaborative unit.” Organizations need the new AI-supported developers working with others as a single collaborative unit to succeed. If they do, the new productivity will go from something that causes stress to something that causes success.

(The opinions expressed above are mine only and not necessarily those of my employer.)

If you do anything with AI, then knowing how to write a good prompt is essential. Whether you’re doing complex vibe coding or merely sending a question to ChatGPT, Google Gemini or Microsoft Copilot, the prompt you use is the key to getting good responses.

Food bloggers are seeing a drop in traffic as home cooks turn to AI, according to

Yep, it’s true. If you have some technical skill, you can download this repo from GitHub:

I have been arguing recently about the limits of the current AI and why it is not going to take over the job of coding yet. I am not alone in this regard. Clive Thompson, who knows a lot about the topic, recently wrote this:
